Quantum computing benchmarking

Most quantum benchmarks report hardware specs and small tests. IonQ's framework measures whether a quantum system solves real computational problems accurately, against a verified quality standard.

Results are standardized, publicly reproducible, and compared across systems on equal terms. To make results easy to reproduce on different quantum computing platforms, the benchmarks and their code are publicly available at the link below.

Core benchmark principles

Inspired by leading AI benchmarking frameworks like MLPerf and DAWNBench, our benchmark framework evaluates the complete technical stack and makes comparisons that are meaningful to the people and businesses that rely on it.

Full system stack evaluation

Each benchmark evaluates the complete system: hardware, compiler, runtime, and all performance management layers (error mitigation, error correction, and circuit optimizations). Component-level metrics matter, but they do not capture the trade-offs that come with every architectural decision. Higher gate fidelity can mean slower gate speed. Error correction consumes physical qubits and increases circuit depth. Application benchmarks measure how the entire system performs together on a real problem.

Reproducible and transparent

This framework follows MLPerf’s structure, with two categories of benchmarks. Closed benchmarks fix the implementation so results are directly comparable across platforms and vendors (the code is “closed” to modifications). Open benchmarks fix the success criterion but allow algorithmic innovation, so teams can show their best results without disclosing proprietary methods (the benchmark is “open” to new code). Both the quantum and the classical code for “closed” benchmarks are available in Qiskit on GitHub for independent verification.

Measuring time and quality

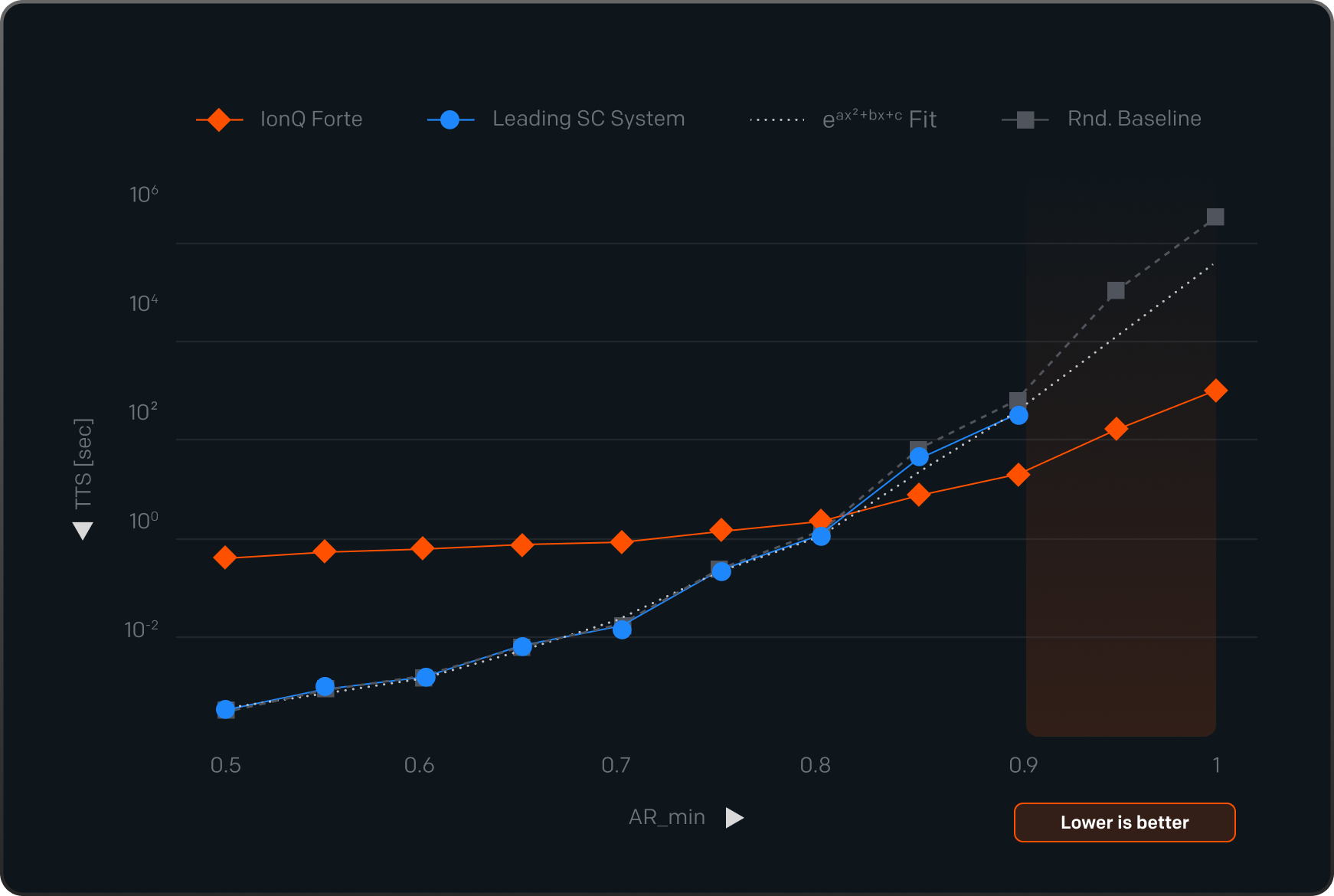

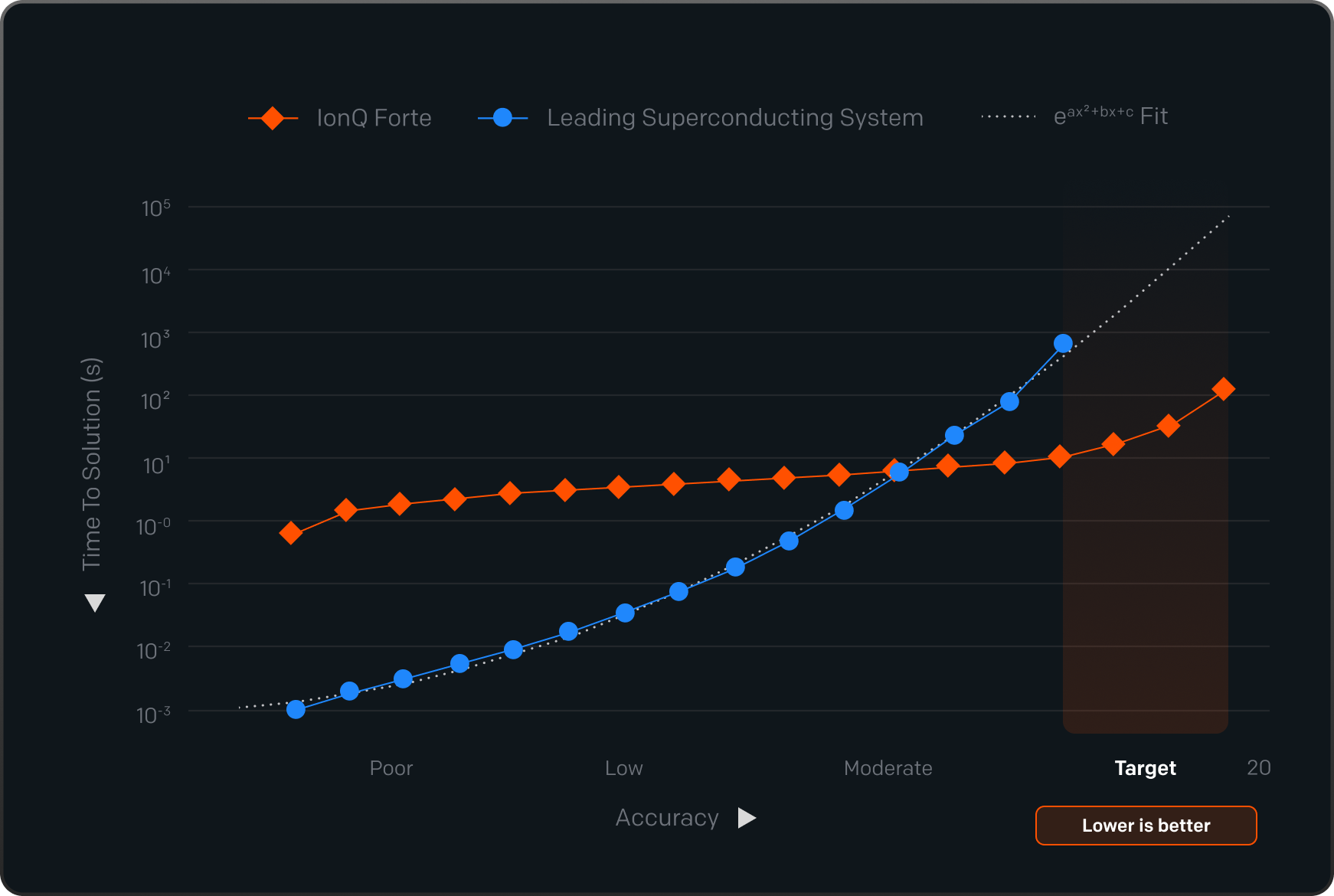

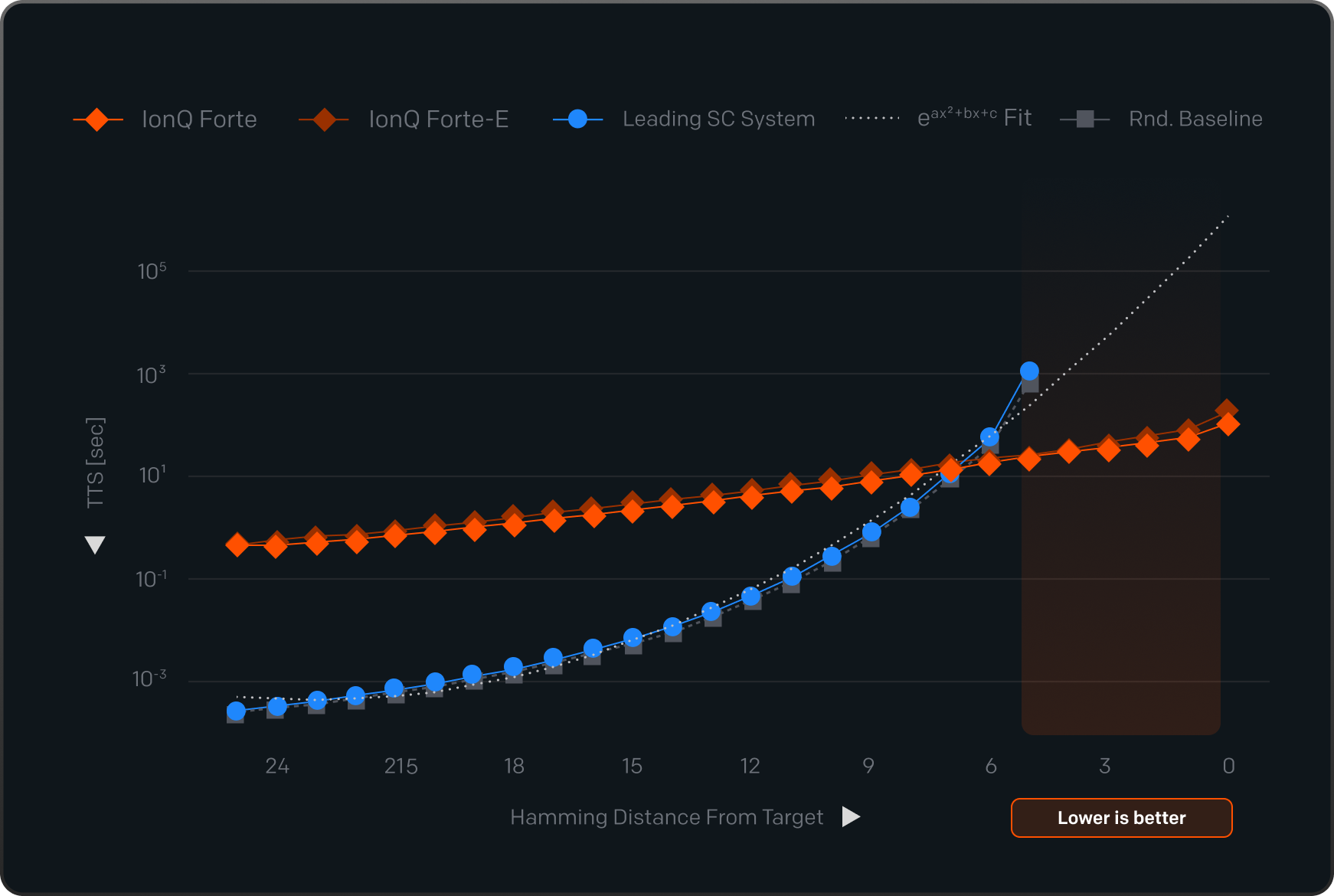

Every benchmark in this suite reports a solution quality score. When measuring quality, every answer is accepted and compared against results from other systems. When measuring Time-to-Solution (TTS), an answer counts only if it meets a predefined accuracy threshold, and the clock keeps running until a qualifying answer is returned. If no qualifying answer is ever produced, TTS is infinite. Energy-to-Solution (ETS) and Cost-to-Solution (CTS) are also reported as outcomes of each benchmark and can be compared head-to-head across systems.

How performance is scored

Most quantum benchmarks today report hardware specs: qubit count, gate fidelity, gate speed, and error rates. Our approach tells you what a system is actually capable of in terms of the quality and time-to-solution of relevant, real-world computational problems.

Every benchmark in this suite is scored on quality. When measuring Time-to-Solution (TTS), what counts as a correct answer is defined before any system runs. An answer that misses the bar does not count, and time measurement continues.

TTS benchmarks measure the total wall time from job submission to a verified, qualifying answer. This covers the full pipeline from compilation through post-processing. Quality benchmarks and TTS benchmarks both require a strong underlying system. The difference is whether time or quality is the measured output.

Results are reported per workload. A single aggregated benchmarking score cannot represent performance across problems that differ in structure, depth, and required accuracy.

Solution Quality

Each benchmark defines upfront what a correct or ideal/optimal answer looks like. For a chemistry simulation, the result has to land within a precise energy range of the known correct molecular energy. For an optimization problem, the solution has to come within a defined percentage of the theoretical best. For a machine learning task, the model has to hit a minimum accuracy threshold. The bar is set before any system runs. A result that misses it is not counted, regardless of how fast it was produced.
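The three examples above amount to acceptance checks that are fixed before a run. The sketch below is illustrative only and is not the suite's code: the function names and the default thresholds (1.6 millihartree, a 5% optimality gap, 90% accuracy) are assumptions chosen for the example, not values taken from the framework.

```python
def chemistry_passes(energy, reference_energy, tolerance=1.6e-3):
    """True if the computed energy lies within `tolerance` (hartree) of the reference."""
    return abs(energy - reference_energy) <= tolerance


def optimization_passes(cost, best_cost, max_gap=0.05):
    """True if a minimization result is within a fractional `max_gap` of the best known cost."""
    return (cost - best_cost) <= max_gap * abs(best_cost)


def classification_passes(correct, total, min_accuracy=0.90):
    """True if the fraction of correct predictions reaches `min_accuracy`."""
    return total > 0 and correct / total >= min_accuracy
```

Because the thresholds are arguments with fixed defaults, the bar is the same for every system, and a result either clears it or does not.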

Time-to-Solution

The clock starts at job submission and stops when a verified, qualifying answer is returned, or the last shot is completed. Compilation, quantum execution, error mitigation, and all classical co-processing count toward the total. Because many benchmarks in this suite involve hybrid quantum-classical workflows, classical co-processing time is included by design. TTS is reported for the five benchmarks in which the problem structure supports a meaningful, reproducible time comparison across systems.
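The timing rule can be sketched as follows. This is a minimal illustration under stated assumptions, not the framework's harness: `time_to_solution`, `attempts`, and `is_qualifying` are hypothetical names, each attempt stands in for one full pipeline pass (compilation, quantum execution, error mitigation, classical co-processing), and a run that ends without a qualifying answer is reported here as an infinite TTS.

```python
import math
import time


def time_to_solution(attempts, is_qualifying, clock=time.perf_counter):
    """Wall time from submission to the first qualifying answer.

    `attempts` is an iterable of zero-argument callables; each one runs a
    full pipeline pass and returns an answer. Returns math.inf when no
    attempt produces a qualifying answer.
    """
    start = clock()  # the clock starts at job submission
    for run in attempts:
        answer = run()  # everything inside the pass counts toward the total
        if is_qualifying(answer):
            return clock() - start  # the clock stops at the qualifying answer
    return math.inf  # the last attempt finished without clearing the bar
```

Passing the clock as a parameter keeps the sketch testable with a fake clock; a real harness would use wall time.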

Real-world results:

IonQ vs peers

The quantum benchmark suite

Application-level benchmarks across optimization, quantum chemistry, machine learning, data loading, simulation, and other foundational subroutines.

This benchmarking suite is designed so that new benchmark challenges can be proposed and launched as new application domains emerge, and existing problems can be modified or retired as hardware capabilities and relevant business problems shift.

Code for benchmarks in the “closed” category is fixed by design and publicly available for independent verification. Benchmarks in the “open” category are open to innovation: other companies can devise novel ways to address the benchmark challenge.

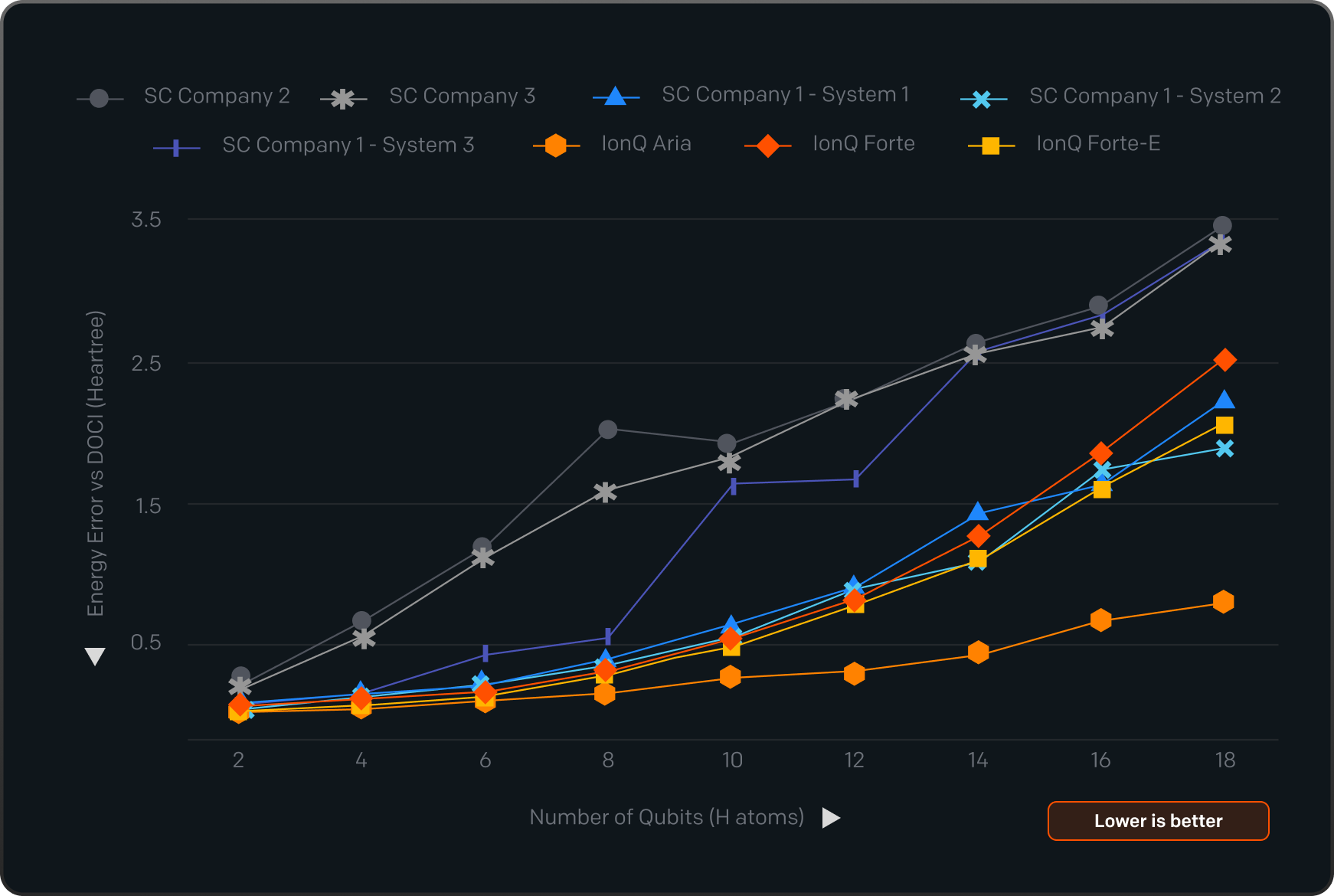

Quantum Chemistry

VQE with UpCCD

Quality

Variational Quantum Eigensolver

This benchmark asks the quantum system to compute the lowest energy state of a molecule. The correct answer is already known from classical calculation. That makes it a clean test: run the quantum circuit, compare the result to ground truth, and the gap tells you exactly how much noise the hardware introduced.

Pharmaceuticals

Materials

Energy

Example Use Cases:

Catalyst design for strongly correlated electron systems

Battery material discovery and drug development

Optimization

QAOA

Quality

Quantum Approximate Optimization Algorithm

This tests how well a quantum system finds near-optimal solutions to combinatorial problems. This is the class of problems that appears throughout routing, scheduling, and resource allocation. Algorithm parameters are fixed in advance, so any difference in results between systems reflects the hardware, not the software choices made around it.
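One common way to score "near-optimal" is an approximation ratio: the average objective value of the measured bitstrings divided by the best achievable value. The sketch below uses MaxCut as an assumed example problem; the text above does not say which problems or which score the benchmark uses. The function names, the brute-force optimum (only feasible for small graphs), and the convention that character i of a bitstring belongs to node i are all assumptions made for illustration.

```python
from itertools import product


def cut_value(edges, bits):
    """Number of edges whose endpoints fall on different sides of the cut."""
    return sum(1 for u, v in edges if bits[u] != bits[v])


def approximation_ratio(edges, n_nodes, counts):
    """Mean cut value over measured bitstrings divided by the optimal cut.

    `counts` maps bitstrings such as "0101" to the number of shots that
    returned them.
    """
    best = max(cut_value(edges, b) for b in product((0, 1), repeat=n_nodes))
    shots = sum(counts.values())
    total = sum(cut_value(edges, [int(c) for c in s]) * n for s, n in counts.items())
    return (total / shots) / best
```

With fixed algorithm parameters, two systems running the same circuit differ only in the measured `counts`, so the ratio isolates what the hardware contributed.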

Finance

Logistics

Manufacturing

Example Use Cases:

Semiconductor chip design and layout optimization

Financial network community detection

Optimization

LR-QAOA

Quality

TTS

Linear-Ramp QAOA

This is a version of the optimization benchmark QAOA where nothing can be tuned. The full algorithm is specified before the circuit runs. Because there are no adjustable parameters, no system can get a better score by optimizing around its hardware's weaknesses. What you see is what the system can do.
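To illustrate what "nothing can be tuned" means, the sketch below builds a linear-ramp schedule: the cost angles rise linearly across the layers while the mixer angles fall linearly, so the number of layers and two slopes determine the whole circuit. This is one common form of a linear ramp, and the exact schedule used by the benchmark is not stated here; `linear_ramp_schedule`, `delta_gamma`, and `delta_beta` are hypothetical names.

```python
def linear_ramp_schedule(p, delta_gamma, delta_beta):
    """Return (gammas, betas) for a p-layer linear-ramp schedule.

    gammas ramp up from delta_gamma / p to delta_gamma;
    betas ramp down from delta_beta to delta_beta / p.
    """
    gammas = [(k + 1) / p * delta_gamma for k in range(p)]
    betas = [(1 - k / p) * delta_beta for k in range(p)]
    return gammas, betas
```

For example, `linear_ramp_schedule(4, 1.0, 1.0)` gives cost angles 0.25, 0.5, 0.75, 1.0 and mixer angles 1.0, 0.75, 0.5, 0.25. No classical optimizer is involved, so there is no per-system tuning loop to account for.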

Finance

Logistics

Manufacturing

Example Use Cases:

Logistics routing and hub-and-spoke network optimization

Supply chain scheduling and resource allocation

Optimization

varQITE

Quality

Variational Quantum Imaginary Time Evolution

An alternative quantum approach to optimization that runs once, with no iterative classical feedback. The circuit is pre-determined and runs to completion. This removes the classical overhead that makes variational algorithms difficult to time and compare, revealing what the hardware itself contributes to solution quality.

Finance

Logistics

Manufacturing

Example Use Cases:

Portfolio construction and asset allocation

Supply chain and operations research

Foundational Subroutines

FAA

Quality

Fixed Point Amplitude Amplification

Quantum computers are expected to search large solution spaces faster than classical alternatives. This benchmark tests that ability under realistic conditions: you do not know in advance how many correct answers exist. That constraint matters because it reflects how real-world search problems work and breaks most standard quantum search algorithms.

Technology

AI/ML

Security

Example Use Cases:

Search over large, noisy decision graphs

Molecular dynamics for drug discovery and synthetic biology

Machine Learning

qCNN

Quality

Quantum Convolutional Neural Network

This benchmark runs a pre-trained quantum neural network on image classification and scores both accuracy and inference speed. The system is tested on whether it delivers correct, consistent results without recalibration between runs.

Healthcare

Security

AI/ML

Example Use Cases:

Tissue classification for digital pathology

Anomaly detection in security image datasets

Machine Learning

Quantum Copula

Quality

Portfolio Risk Analysis

This benchmark trains a quantum circuit to understand how a group of assets tends to move together, then uses that model to predict portfolio risk. Those risk predictions are scored against actual historical market data, not a simulation. It is one of the few quantum benchmarks grounded in real financial outcomes.

Finance

Insurance

Investment Banking

Example Use Cases:

Portfolio risk modeling and reserve optimization

Risk-weighted asset allocation

Data Loading

Tensor Network Circuits

Quality

Image Loading via MPS

Before a quantum computer can process classical data, that data has to be loaded into the system without significant loss. This benchmark tests how accurately a system can do that with image data, across two datasets, as the encoding process becomes more complex. Noise accumulates with complexity. The score reveals where and how fast that accumulation happens.

AI/ML

Healthcare

Manufacturing

Example Use Cases:

Manufacturing defect detection via image analysis

Medical imaging diagnostics and enhancement

Foundational Subroutines

QFT

Quality

TTS

Quantum Fourier Transform Suite

The Quantum Fourier Transform is central to some of quantum computing's most important algorithms, including those for cryptography and chemistry. The problem is that existing QFT benchmarks are often trivially simplified by modern compilers, producing scores that do not reflect real-world execution. This suite closes that gap: each variant is specifically designed so that the full circuit must execute.
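One way a QFT test can collapse is shown below. It is an illustrative case, not necessarily the one the suite's authors had in mind: applied to the all-zeros input, the QFT produces the same state as a single layer of Hadamard gates, so a compiler can legitimately replace the whole circuit with that layer. The sketch checks this numerically on dense state vectors; the helper names are hypothetical.

```python
import cmath
import math


def qft_apply(state):
    """Apply the QFT to a state vector as a dense discrete Fourier transform."""
    dim = len(state)
    return [
        sum(state[j] * cmath.exp(2j * math.pi * j * k / dim) for j in range(dim))
        / math.sqrt(dim)
        for k in range(dim)
    ]


def hadamard_all(state):
    """Apply a Hadamard gate to every qubit (length must be a power of two)."""
    out = list(state)
    step = 1
    while step < len(out):
        for base in range(0, len(out), 2 * step):
            for i in range(base, base + step):
                a, b = out[i], out[i + step]
                out[i] = (a + b) / math.sqrt(2)
                out[i + step] = (a - b) / math.sqrt(2)
        step *= 2
    return out


def close(u, v, tol=1e-9):
    """True if two state vectors agree element-wise within `tol`."""
    return all(abs(a - b) <= tol for a, b in zip(u, v))


zero = [1.0] + [0.0] * 7  # three-qubit all-zeros state
one = [0.0, 1.0] + [0.0] * 6  # basis state with index 1
trivial_on_zero = close(qft_apply(zero), hadamard_all(zero))
trivial_on_one = close(qft_apply(one), hadamard_all(one))
```

Here `trivial_on_zero` is true and `trivial_on_one` is false: the shortcut exists only for special inputs, which is why a variant that forces the full circuit to run has to avoid them.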

Cryptography

Security

Metrology

Example Use Cases:

RSA decryption using Shor’s algorithm

Quantum signal processing and metrology

Foundational Subroutines

HSBP

Quality

TTS

Hidden Shift Benchmark Problem

A stress test where the difficulty scales continuously, making it possible to find exactly where a system's performance starts to degrade. Rather than a single pass or fail, this benchmark maps out a system's capability curve. That information is more useful for hardware comparison than any single number.
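Reading a capability curve can be sketched as finding the first difficulty level at which the score drops below a chosen bar. The data layout (a mapping from difficulty to score), the function name, and the example numbers below are hypothetical and are not results from any system.

```python
def degradation_point(curve, threshold):
    """Return the lowest difficulty whose score is below `threshold`, or None.

    `curve` maps a difficulty level to the score measured at that level.
    """
    for difficulty, score in sorted(curve.items()):
        if score < threshold:
            return difficulty
    return None


# Made-up scores for illustration only.
example_curve = {2: 0.98, 4: 0.95, 8: 0.81, 16: 0.42}
first_below_bar = degradation_point(example_curve, threshold=0.90)
```

In this made-up curve the score first falls below 0.90 at difficulty 8. Keeping the whole curve, rather than only that point, preserves how quickly performance falls off afterwards.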

Cryptography

Security

Research

Example Use Cases:

Hardware characterization for cryptographic workloads

Gate performance validation at scale

High Energy Physics

Quality

TTS

Neutrinoless Double Beta Decay Simulation

This is a physics simulation run at the edge of what current hardware can execute. Noise accumulating across hundreds of operations is the dominant challenge at this scale. The benchmark asks whether the hardware can still recover a statistically meaningful signal from that level of complexity, a direct test of what present-day systems can do at scale.

Research

Defense

National Labs

Example Use Cases:

Nuclear physics simulation beyond Standard Model constraints

Many-body quantum dynamics research

Quantum Chemistry

QC-AFQMC

Quality

Quantum-Classical Auxiliary Field Quantum Monte Carlo

The quantum computer prepares an initial state that gives a classical simulation a better starting point, allowing it to compute molecular energies more accurately than it could on its own. The hybrid workflow is timed from start to finish. The score is whether the combined system reaches a chemically accurate answer, and how long that takes.

Pharmaceuticals

Materials Science

Energy

Example Use Cases:

Drug discovery and protein-ligand binding

High-temperature superconductor research

Simulation

QLBM

Quality

Quantum Lattice Boltzmann Method

This simulates how a fluid evolves, then checks at each step whether the result matches the known classical solution. Running the simulation forward through multiple iterations tests whether the hardware can sustain accuracy over a full sequence, not just on a single circuit.

Engineering

Climate

Materials Science

Example Use Cases:

Fluid dynamics simulation for aerospace and automotive engineering

Climate and atmospheric modeling

Behind the benchmark:

How IonQ designed its quantum performance framework

Read the full white paper

Our benchmarking framework prioritizes fairness, transparency, and real-world relevance. It's designed to evolve alongside the technology it measures. Read the full white paper to learn how we define problem size, solution quality thresholds, TTS, and more, to holistically benchmark a quantum computer.